Data has come to play a significant role in the growth of the digital economy, with some of the largest firms in the world relying on it as a core part of their business models. CIOs in particular have been using data to extract insights that inform their own decisions or guide the CEO.

However, as data production and use increase, so does the proliferation of bad, misleading, and even fraudulent data.

Location data is used by CIOs across a wide range of industries for a variety of purposes. CIOs in the auto industry use it to understand footfall in and around dealerships in Malaysia, retail CIOs use it to plan their next expansion, and healthcare executives use it to determine catchment areas and plan services. The list goes on.

While CMOs have traditionally used location data for marketing campaigns, CIOs are increasingly using it for strategic purposes.

These are just a few examples of how location data is used, and they illustrate the importance of accurate, high-quality data, given that decisions worth many millions of dollars are often based on it. Location data can be a powerful tool in a CIO’s arsenal, but it is important to understand what constitutes good data.

Let us consider two of the main sources of location data on the market, and what the characteristics of a data feed tend to indicate about its quality.

Bidstream data

Bidstream data, collected from advertising bid requests, is one of the lowest-quality sources of location data. Every time you use an app on your smartphone and an advertisement pops up, data such as the device ID and IP address is sent back to ad servers.

The accuracy of bidstream data is weak at best. It is therefore no surprise that this source is not favoured by businesses that rely on location data to drive decision-making, whether that means choosing where to place their next retail store or feeding artificial intelligence (AI) tools.

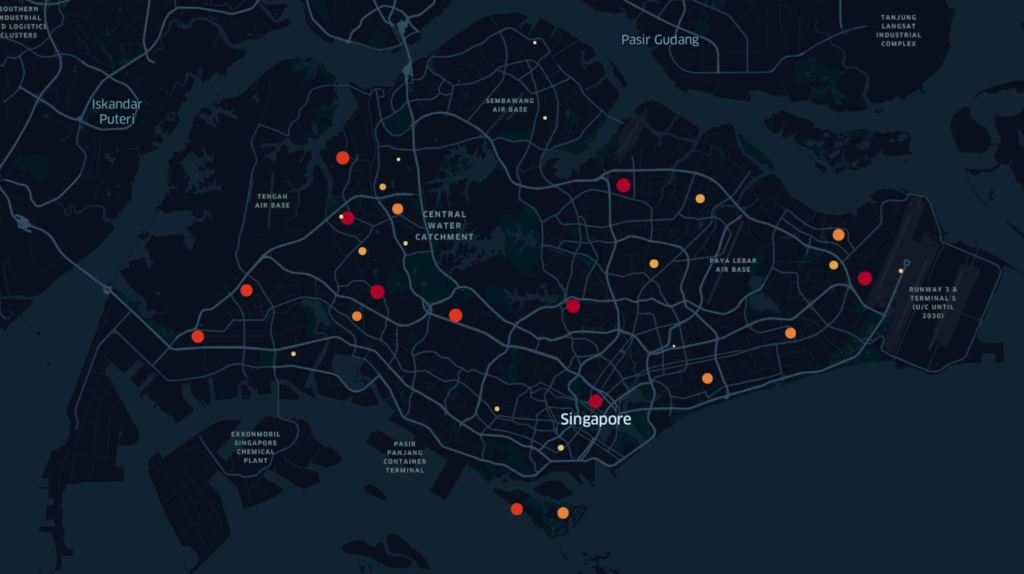

Bidstream data in Singapore, showing large numbers of users in what are generally ‘random’ locations, including areas of jungle

Bidstream data, though available in large quantities compared to other sources, is an unreliable source of location data. Latitude and longitude (lat/long) values sourced from bidstream data are most likely derived from IP addresses, and deriving coordinates this way is notoriously inaccurate.

Some database providers claim to be able to convert IP addresses into lat/long coordinates to determine device location, but they are highly unlikely to do so with any useful accuracy. When analysing location data sets, it is not uncommon to find large amounts of data pointing to the exact same coordinates. This can only mean one thing: extreme inaccuracy.
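To make the pile-up check concrete, here is a minimal Python sketch, assuming records arrive as (lat, lon) tuples. It simply counts how many records share each exact coordinate and reports the coordinates that hold an outsized share of the feed. The function name `find_coordinate_hotspots` and the 1% threshold are hypothetical choices for illustration, not an industry standard; a sensible threshold would depend on the region and the size of the feed.

```python
from collections import Counter


def find_coordinate_hotspots(points, max_share=0.01):
    """Return {(lat, lon): count} for exact coordinates holding more than max_share of records.

    points: list of (lat, lon) tuples. The 1% default is an illustrative
    assumption, not an industry threshold.
    """
    if not points:
        return {}
    counts = Counter(points)  # identical (lat, lon) pairs are counted together
    total = len(points)
    return {coord: n for coord, n in counts.items() if n / total > max_share}


# 50 pings stacked on a single point plus 50 scattered pings
pings = [(1.3521, 103.8198)] * 50 + [(1.300 + i * 0.001, 103.80) for i in range(50)]
print(find_coordinate_hotspots(pings))  # → {(1.3521, 103.8198): 50}
```

Grouping on exact coordinates mirrors the symptom described above; a fallback location assigned to every unresolvable device shows up as one coordinate with a disproportionate count.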

As you can see in the image above, each dot represents many thousands of users in one location at any one time. While Singapore is indeed densely populated, the likelihood that thousands of people would be present in forested or sparsely populated areas in the west of the island is low.

So, what’s going on? The problem stems from the fact that when location database providers cannot find lat/long coordinates for a device, they automatically assign it a default, often arbitrary, location: in this instance, various residential and non-residential areas in Singapore.

This and similar practices in other countries can result in tens or hundreds of thousands of people appearing to be located in the middle of a park, suburban area, or even jungle!

Mobile GPS data

This brings us to higher-quality sources of location data. Mobile GPS data is far more accurate and is of the most value to CIOs. The downside is that it is available in smaller volumes and is, unsurprisingly, more expensive. But the old adage holds true: you get what you pay for.

Supplying the highest-quality location data should be a priority for data providers, and performing consistent quality checks across data supply chains is a crucial step towards it.

There are several red flags for CIOs to look out for. A lack of movement tends to indicate low-quality location data, whereas high-quality data shows plenty of movement. Data that places large numbers of people at the same coordinate, beyond what could reasonably be expected, is always a red flag.
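The lack-of-movement check can be sketched in a few lines of Python. This is an illustrative sketch rather than any provider’s actual method: it assumes pings are (device_id, lat, lon) tuples already sorted by time, measures distance with the haversine great-circle formula, and treats a device as stationary if every ping stays within a hypothetical 50-metre radius of its first one. The names `haversine_m` and `stationary_device_share` are made up for this example.

```python
import math

EARTH_RADIUS_M = 6_371_000  # mean Earth radius in metres


def haversine_m(lat1, lon1, lat2, lon2):
    """Great-circle distance in metres between two lat/long points."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


def stationary_device_share(pings, radius_m=50.0):
    """Share of devices whose every ping stays within radius_m of their first ping.

    pings: iterable of (device_id, lat, lon), assumed sorted by time.
    Devices with a single ping count as stationary, since they show no movement.
    """
    first_seen = {}  # device_id -> (lat, lon) of its first ping
    max_move = {}    # device_id -> furthest distance from that first ping
    for device, lat, lon in pings:
        if device not in first_seen:
            first_seen[device] = (lat, lon)
            max_move[device] = 0.0
        else:
            dist = haversine_m(*first_seen[device], lat, lon)
            max_move[device] = max(max_move[device], dist)
    if not first_seen:
        return 0.0
    stationary = sum(1 for d in max_move.values() if d <= radius_m)
    return stationary / len(first_seen)


pings = [
    ("a", 1.30, 103.80), ("a", 1.30, 103.80),  # never moves
    ("b", 1.30, 103.80), ("b", 1.35, 103.85),  # moves several kilometres
]
print(stationary_device_share(pings))  # → 0.5
```

A feed in which the large majority of devices come out as stationary would match the low-quality pattern described above; what counts as "too high" is a judgement the buyer has to make for each market.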

Lastly, watch out for teleportation: the same device appearing in multiple countries or regions within the same 24-hour period.
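A teleportation check might look something like the following Python sketch. It assumes each ping has already been tagged with a country code (reverse geocoding is not shown) and slides a 24-hour window over each device’s pings in time order, flagging any device seen in more than `max_countries` countries inside one window. The function name and parameters are hypothetical. With `max_countries=1`, it follows the definition above literally, which will also flag legitimate border crossings such as commuting between Singapore and Johor in Malaysia; a buyer might raise the limit or compare implied travel speed instead.

```python
from collections import Counter, defaultdict
from datetime import datetime, timedelta


def find_teleporting_devices(pings, window=timedelta(hours=24), max_countries=1):
    """Return IDs of devices seen in more than max_countries countries within any window.

    pings: iterable of (device_id, timestamp, country_code). Each ping is assumed
    to be tagged with a country already (e.g. by reverse geocoding, not shown).
    """
    by_device = defaultdict(list)
    for device, ts, country in pings:
        by_device[device].append((ts, country))

    flagged = set()
    for device, events in by_device.items():
        events.sort()              # chronological order
        in_window = Counter()      # country -> pings inside the current window
        start = 0
        for ts, country in events:
            in_window[country] += 1
            # Slide the window's left edge forward past pings older than `window`.
            while ts - events[start][0] > window:
                old = events[start][1]
                in_window[old] -= 1
                if in_window[old] == 0:
                    del in_window[old]
                start += 1
            if len(in_window) > max_countries:
                flagged.add(device)
                break
    return flagged


pings = [
    ("dev-1", datetime(2024, 1, 1, 8), "SG"),
    ("dev-1", datetime(2024, 1, 1, 20), "GB"),  # London 12 hours after Singapore
    ("dev-2", datetime(2024, 1, 1, 8), "SG"),
    ("dev-2", datetime(2024, 1, 3, 8), "MY"),   # 48 hours later: outside the window
]
print(find_teleporting_devices(pings))  # → {'dev-1'}
```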

Understanding what quality data is

Going forward, a key challenge remains educating data buyers about these problems. The reality is that many care more about volume and metrics such as daily active users (DAUs) than about deeper quality considerations.

But a deep dive into the data you are purchasing may reveal that it falls short of your expectations. Some data feeds with high DAU counts may look high quality on the surface, but turn out to be full of low-quality data once you look beyond volume and device counts.

Bidstream data fits this description: while it usually includes more DAUs, which makes it easy for publishers to sell the data at higher profits, most of its devices are spotted in stationary positions.

High-quality mobile location data in Singapore. The dots represent fewer users each and are concentrated in areas of high population density, with a clear movement pattern visible along the roads

Defining fraudulent data can be harder. When is a data set simply low-quality or misleading, and when does it become fraudulent? Intent to mislead or trick seems to be the key consideration here.

Manipulation of the source data, for example, would more likely point to data fraud.

Providers can do this by shifting timestamps by a few seconds so that the records appear to form a different data set. Alternatively, they may alter the lat/long coordinates slightly, in the last few decimal places.
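One rough way to test for this kind of relabelling is to coarsen both feeds and measure how much they overlap. The Python sketch below is an assumption-laden illustration, not an established fraud test: it floors each timestamp to a 60-second bucket, rounds coordinates to three decimal places (a grid of roughly 110 metres in latitude), and reports the share of records in one feed whose coarse fingerprint also appears in the other. The names `fingerprint` and `near_duplicate_share` and both default tolerances are hypothetical.

```python
from datetime import datetime, timedelta, timezone


def fingerprint(ts, lat, lon, time_bucket_s=60, decimals=3):
    """Coarse key: timestamp floored to a time bucket, coordinates rounded."""
    return (int(ts.timestamp()) // time_bucket_s, round(lat, decimals), round(lon, decimals))


def near_duplicate_share(feed_a, feed_b, time_bucket_s=60, decimals=3):
    """Share of feed_b records whose coarse fingerprint also occurs in feed_a.

    Records are (timestamp, lat, lon). Both default tolerances are
    illustrative assumptions, not industry thresholds.
    """
    if not feed_b:
        return 0.0
    seen = {fingerprint(ts, lat, lon, time_bucket_s, decimals) for ts, lat, lon in feed_a}
    hits = sum(
        1 for ts, lat, lon in feed_b
        if fingerprint(ts, lat, lon, time_bucket_s, decimals) in seen
    )
    return hits / len(feed_b)


t0 = datetime(2024, 1, 1, 8, 0, 10, tzinfo=timezone.utc)
feed_a = [(t0, 1.35210, 103.81980)]
feed_b = [
    (t0 + timedelta(seconds=5), 1.35212, 103.81983),  # shifted copy of feed_a's record
    (t0 + timedelta(hours=1), 1.29000, 103.85000),    # genuinely different record
]
print(near_duplicate_share(feed_a, feed_b))  # → 0.5
```

Because a small shift can push a record across a bucket or rounding boundary, this approach undercounts near-duplicates; checking neighbouring buckets as well would be a natural refinement. A high overlap between two supposedly independent feeds is a prompt for closer inspection rather than proof of fraud.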

The motivation? Almost always to maximise profits by selling location data known to be low quality. But demand for volume over quality has also made it easier for data fraudsters to go undetected and get away with their tricks.

The role of the CIO, along with other C-level leaders, is to set and lead the company’s technology strategy, in which Big Data plays an increasingly important part.

They need to avoid focusing on volume, because no amount of data or algorithms can compensate for low-quality data. Instead, to ensure the best possible results, they should work with their IT team and CTO to arm themselves with a basic understanding of what good and bad data look like.