The panic arising from the spread of the coronavirus is rooted in part in the lack of a single source of truth. The Internet and social media have made it easy to create misinformation, which sows the seeds of mistrust.

I was speaking to a Vietnamese developer who recently launched a website that they claim uses artificial intelligence to sniff out data related to the coronavirus, discern real from fake data points, and publish the result as what they claim is the most truthful current picture of the state of the coronavirus or, as it is officially called today, COVID-19.

One benefit of running a small business is that the owner has only one version of the truth to deal with – his. If he lies, he knows he is lying. It is not as easy when the organisation gets bigger.

Today most organisations are confused by the amount of data they collect, including multiple versions of the same truth. This is because most organisations have multiple information systems, each of which needs access to data potentially relating to the same entities but viewed from a different angle.

For example, a customer profile is seen differently by finance, purchasing, sales, or marketing.

The single source of truth conundrum became the topic of discussion when FutureCIO spoke to Angel Vina, CEO and founder of Denodo Technologies, who claims that data virtualisation helps create one single view, a single source, of this data.

According to Gartner, data virtualization technology is based on the execution of distributed data management processing, primarily for queries, against multiple heterogeneous data sources, and federation of query results into virtual views.

It provides a layer of abstraction above the physical implementation of data, to simplify querying logic.
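The definition above can be illustrated with a minimal sketch. It is not Denodo's product or API; the two sources (an in-memory SQLite table and a CSV export), the `customer_view` function and all field names are hypothetical, chosen only to show queries running against heterogeneous sources and being federated into one virtual view at request time.

```python
import csv
import io
import sqlite3

# Source 1 (hypothetical): a relational sales database.
sales_db = sqlite3.connect(":memory:")
sales_db.execute(
    "CREATE TABLE customers (customer_id INTEGER, name TEXT, region TEXT)"
)
sales_db.executemany(
    "INSERT INTO customers VALUES (?, ?, ?)",
    [(1, "Acme", "APAC"), (2, "Globex", "EMEA")],
)

# Source 2 (hypothetical): a finance export held as CSV text.
FINANCE_CSV = "customer_id,outstanding_balance\n1,1200.50\n2,0.00\n"


def customer_view(region):
    """Virtual view: query both sources on demand and join the results.

    Nothing is copied into a new repository; every call reads the sources.
    """
    balances = {
        int(row["customer_id"]): float(row["outstanding_balance"])
        for row in csv.DictReader(io.StringIO(FINANCE_CSV))
    }
    rows = sales_db.execute(
        "SELECT customer_id, name, region FROM customers "
        "WHERE region = ? ORDER BY customer_id",
        (region,),
    ).fetchall()
    return [
        {
            "customer_id": cid,
            "name": name,
            "region": reg,
            "outstanding_balance": balances.get(cid),
        }
        for cid, name, reg in rows
    ]


print(customer_view("APAC"))
```

The caller asks one question of `customer_view` and never needs to know that the name comes from one system and the balance from another, which is the simplification of querying logic the definition refers to.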

Data virtualisation – a C-suite perspective

How does the CIO or CTO explain this mumbo-jumbo to the C-suite leadership?

Vina concedes that different roles may take different approaches to taking advantage of this technology.

He acknowledges that data is the most important asset in every company.

“So easy access to data and comprehensive governance of the data, security of data assets, and self-service capabilities to empower the business users to use the data for better performance, better operation of whatever business we are dealing with is at the very core of the priorities everywhere,” he commented.

CEO: “It is very much about shaping the strategy. How do I make the business more efficient? How do I impact my revenue model the most using these advantages, the new capabilities that data virtualization brings to the table?” he started.

CTO: It is about whether I am in control of the data assets. Am I applying the right security policies, the right governance policies to my data assets? Are my users using the data in the right way, compliant with the regulators?

CFO: It is about savings, efficiency, better performance. Am I taking advantage of these capabilities for faster access to the data with better ROI? That is the most important thing. But it is also a key technology to bridge the technical teams with the business users in organizations, in corporations.

How data virtualisation works

According to Vina, the goal behind data virtualization is to unify dispersed data. Data that may be in cloud repositories, on-prem, sitting with third-party business partners or suppliers, and so on. To unify diversity.

The legacy approach is to move data into new repositories, create data warehouses. These days it is also about data lakes, cloud storage. Also moving data.

He noted that with data virtualization, there is no need to move data. Metadata creates a unified layer across these multiple diverse repositories. He singles this out as different from an older approach called data federation, arguably the predecessor to data virtualisation.

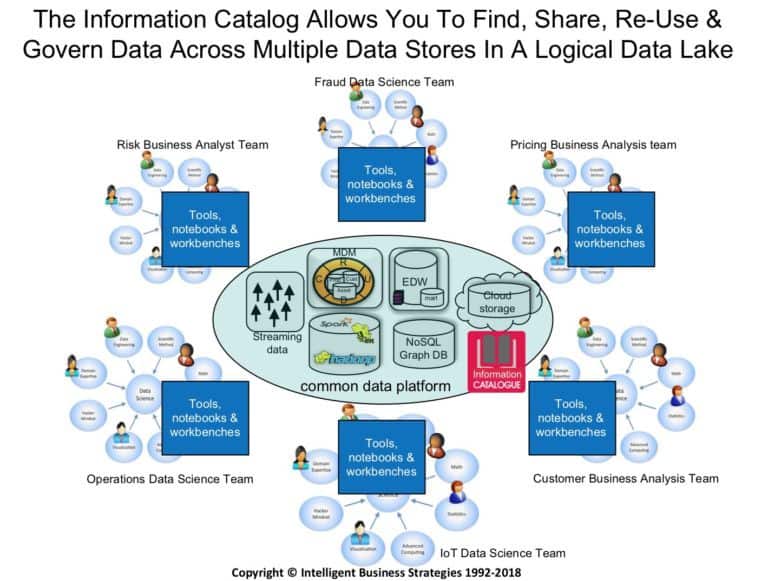

“The role of the data catalog is to discover, profile, tag and classify data, to map it to a business glossary so that people know what the data means. It provides lineage and helps organise data in the data lake making it easy to find. Increasingly the information catalog is being plugged into data preparation tools, data science workbenches and self-service BI tools so that people working in data and analytics projects can easily see across the logical data lake to find the data they need,” he elaborated.
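As a rough illustration of the catalog functions Vina lists (tagging, mapping to a business glossary, lineage, findability), here is a minimal sketch. The `CatalogEntry` structure, the dataset names and the helper functions are all hypothetical and do not reflect any vendor's catalog.

```python
from dataclasses import dataclass, field


@dataclass
class CatalogEntry:
    name: str                 # dataset identifier
    source: str               # where the data physically lives
    glossary_term: str        # business meaning of the dataset
    tags: set = field(default_factory=set)
    derived_from: list = field(default_factory=list)  # direct lineage


# Hypothetical catalog contents.
CATALOG = [
    CatalogEntry("crm.customers", "sales database", "Customer",
                 {"pii", "master-data"}),
    CatalogEntry("erp.invoices", "finance system", "Invoice", {"finance"}),
    CatalogEntry("lake.customer_revenue", "data lake", "Customer Revenue",
                 {"finance", "analytics"}, ["crm.customers", "erp.invoices"]),
]


def find_by_tag(tag):
    """Return the names of all datasets carrying the given tag."""
    return [entry.name for entry in CATALOG if tag in entry.tags]


def lineage(name):
    """Return every upstream dataset of `name`, walking lineage recursively."""
    by_name = {entry.name: entry for entry in CATALOG}
    upstream = []
    for parent in by_name[name].derived_from:
        upstream.append(parent)
        upstream.extend(lineage(parent))
    return upstream


print(find_by_tag("finance"))
print(lineage("lake.customer_revenue"))
```

A BI tool or data science workbench plugged into such a catalog would call lookups like these instead of asking each user to know where every dataset lives.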

Data virtualisation and governance

On the governance side, because this technology is an abstraction layer created between applications and data repositories, data virtualisation becomes the perfect place to introduce data policies in a corporation.
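One way to picture a policy living in that abstraction layer is column masking by role, applied once in the layer rather than in every application. The sketch below is illustrative only: the `POLICY` table, the role names and `apply_policy` are assumptions, not a description of how any particular platform enforces governance.

```python
# Hypothetical policy: the columns each role may see unmasked.
POLICY = {
    "finance": {"customer_id", "name", "outstanding_balance"},
    "marketing": {"customer_id", "name", "email"},
}

MASK = "***"


def apply_policy(role, rows):
    """Mask every column the role is not entitled to see.

    Unknown roles get an empty allow-list, so all values are masked.
    """
    allowed = POLICY.get(role, set())
    return [
        {col: (val if col in allowed else MASK) for col, val in row.items()}
        for row in rows
    ]


# Rows as they might come back from the underlying repositories.
ROWS = [
    {
        "customer_id": 1,
        "name": "Acme",
        "email": "ops@acme.example",
        "outstanding_balance": 1200.5,
    }
]

print(apply_policy("marketing", ROWS))
print(apply_policy("finance", ROWS))
```

Because every application reads through the same layer, changing the allow-list in one place changes what all consumers see, which is the appeal of putting policy there.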

“There is a lot that we can do adding governance functions, governance capabilities. Governance that may be shaped with a specific model to a specific vertical not just the capability there agnostically to be used without any pre-models introduced into the platform. Plenty of space to evolve,” he commented.

He opined that data virtualisation will be at the very core of data management solutions for big corporations, and small and medium businesses as well.

Supporting digital transformation

Companies and organizations have a mandate from their CEOs to bring agility to the business: to be flexible in reacting to changes in business objectives, to be more competitive against other companies in their space, to be compliant with new regulation, and to create the right setting for innovation in the company.

“All of these objectives start with having good analysis that provides good insights in our customer base, in our product quality, in our service to our customers. The only way to do that is introducing technology that does not really put any obstacles in how fast I can move with my business. It is very much about data usage, data consumption, how can I really bring to the table the data I need and put this data in the hands of the business users as fast as possible and in the way they need it,” he concluded.

In 2017, Gartner declared data catalogs as “the new black in data management and analytics” and now they are recognized as a central technology for data management.

According to the Gartner research report, Augmented Data Catalogs: Now an Enterprise Must-Have for Data and Analytics Leaders, “Demand for data catalogs is soaring as organizations continue to struggle with finding, inventorying and analysing vastly distributed and diverse data assets. Data and analytics leaders must investigate and adopt ML-augmented data catalogs as part of their overall data management solutions strategy.”