Skymizer Taiwan Inc. has unveiled a new AI inference architecture designed to run ultra-large language models (LLMs) on a single card. Enterprises are looking for alternatives to cloud-based AI inference as costs rise and concerns about data sovereignty grow, and the solution aims to significantly reduce their reliance on large GPU clusters.

“The era of needing superscalar GPU clusters for ultra-large LLMs is over. HyperThought shifts AI from hyperscaler-only complexity to single-card simplicity for every enterprise,” said William Wei, chief marketing officer of Skymizer.

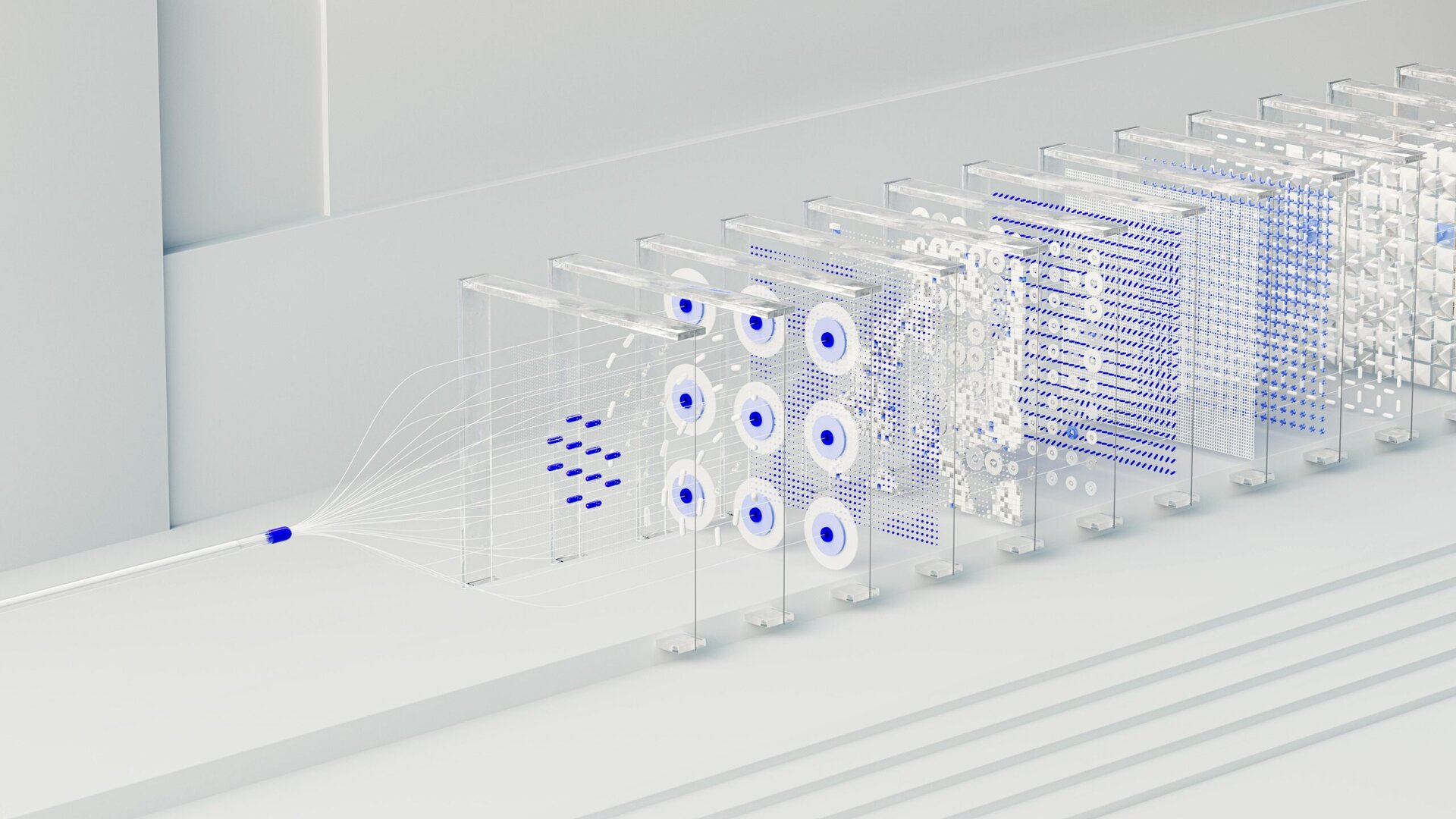

AI inference architecture

The company showcased its HTX301 inference chip, a key component of Skymizer’s HyperThought platform. A single PCIe card carrying six HTX301 chips and 384GB of memory can run inference locally on models of up to 700 billion parameters while drawing approximately 240 watts.
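The card-level figures can be sanity-checked with simple arithmetic. The sketch below is a back-of-envelope calculation under assumptions that are not from Skymizer: 1 GB is taken as 10^9 bytes, memory and power are split evenly across the six chips, and the full 384GB is treated as available for model weights. A real deployment also needs room for the KV cache and activations, so the actual budget would be tighter.

```python
# Back-of-envelope check of the single-card figures quoted in the article.
# Assumptions (NOT stated by Skymizer): 1 GB = 10**9 bytes, memory and power
# are split evenly across chips, and all card memory is available for weights.

CARD_MEMORY_GB = 384
CHIPS_PER_CARD = 6
CARD_POWER_W = 240
MAX_PARAMS = 700e9


def memory_per_chip_gb(card_gb: float, chips: int) -> float:
    """Memory per chip if the card's memory is divided evenly."""
    return card_gb / chips


def power_per_chip_w(card_w: float, chips: int) -> float:
    """Power per chip if the whole card budget is divided evenly (ignores other board components)."""
    return card_w / chips


def max_bits_per_param(card_gb: float, params: float) -> float:
    """Highest weight precision (bits per parameter) that still fits in card memory."""
    return card_gb * 1e9 * 8 / params


def weight_footprint_gb(params: float, bits: float) -> float:
    """Storage needed for a model's weights at a given precision, in GB."""
    return params * bits / 8 / 1e9


if __name__ == "__main__":
    print(f"memory per chip: {memory_per_chip_gb(CARD_MEMORY_GB, CHIPS_PER_CARD):.0f} GB")
    print(f"power per chip: {power_per_chip_w(CARD_POWER_W, CHIPS_PER_CARD):.0f} W")
    print(f"bits/param budget at 700B: {max_bits_per_param(CARD_MEMORY_GB, MAX_PARAMS):.2f}")
    for bits in (16, 8, 4):
        print(f"700B weights at {bits}-bit: {weight_footprint_gb(MAX_PARAMS, bits):.0f} GB")
```

Running it prints 64 GB and 40 W per chip and a budget of about 4.39 bits per parameter. At 16-bit or 8-bit precision a 700-billion-parameter model would need 1,400GB or 700GB for weights alone, while at 4-bit it needs about 350GB, so fitting such a model on one card appears to require weights stored at roughly 4 bits or less. The announcement does not state which precision is used.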

The architecture is intended to let enterprises deploy AI workloads on-premises, improving operational control and data privacy while reducing their dependence on large GPU clusters.

Designed for scalability, HyperThought supports deployment of AI models ranging from 4 billion to 700 billion parameters across various form factors.

Skymizer positions HTX301 as particularly suited to agentic AI workflows, with target applications spanning financial services, healthcare, manufacturing, legal services, government and defence, retail, software engineering, and semiconductor design.

The platform uses a prefill/decode (P/D) disaggregation strategy, separating the compute-intensive prompt-processing (prefill) phase from the memory-bandwidth-intensive token-generation (decode) phase. This lets existing GPUs handle prefill while HTX301 cards take on the decode-heavy part of inference.
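The division of labour described above can be sketched as a two-stage pipeline. This is a minimal toy, not Skymizer’s software: the names `PrefillWorker`, `DecodeWorker`, `KVCache` and `Router` are hypothetical, and the “model” is a placeholder arithmetic rule so the example runs without any ML library. What it illustrates is the hand-off: the prefill stage consumes the whole prompt at once and produces cached state, which the decode stage then extends one token per step.

```python
# Toy sketch of prefill/decode (P/D) disaggregation.
# Hypothetical names throughout: PrefillWorker stands in for an existing GPU,
# DecodeWorker for a decode-oriented card such as HTX301, and the "model" is a
# trivial arithmetic rule rather than a real LLM.
from dataclasses import dataclass, field
from typing import List


@dataclass
class KVCache:
    """Per-request state produced by prefill and handed to decode."""
    entries: List[int] = field(default_factory=list)


class PrefillWorker:
    """Handles the prompt-processing phase (compute-bound in real systems)."""

    def run(self, prompt_tokens: List[int]) -> KVCache:
        # All prompt tokens are known up front, so they are ingested in a
        # single call; here we simply store them as the cached state.
        return KVCache(entries=list(prompt_tokens))


class DecodeWorker:
    """Handles token generation (memory-bandwidth-bound in real systems)."""

    def run(self, cache: KVCache, max_new_tokens: int) -> List[int]:
        out: List[int] = []
        for _ in range(max_new_tokens):
            # Placeholder "model": next token = sum of last two cached tokens mod 100.
            nxt = sum(cache.entries[-2:]) % 100
            cache.entries.append(nxt)  # each step extends the cache by one entry
            out.append(nxt)
        return out


class Router:
    """Sends each phase of a request to the worker pool suited to it."""

    def __init__(self, prefill: PrefillWorker, decode: DecodeWorker) -> None:
        self.prefill = prefill
        self.decode = decode

    def generate(self, prompt_tokens: List[int], max_new_tokens: int) -> List[int]:
        cache = self.prefill.run(prompt_tokens)        # phase 1: prefill pool
        return self.decode.run(cache, max_new_tokens)  # phase 2: decode pool
```

For example, `Router(PrefillWorker(), DecodeWorker()).generate([1, 2, 3], 4)` returns `[5, 8, 13, 21]`. In a real deployment the cached attention state would also have to be transferred between devices, a step this toy omits.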

“Purpose-built decode hardware paired with an intelligent software stack that orchestrates every inference workload — that’s how you disaggregate P/D at scale,” said Luba Tang, chief technology officer of Skymizer.